March 13th-17th, 2017

For the First Time

SEMI-THERM 33 will feature Data Center Track, an entire day dedicated to addressing the impact of thermal design of IT equipment on the performance of the modern data center.

SEMI-THERM and AFCOM have come together to address the engineering impact that the gap between IT thermal design and operational planning has on the performance of the data center. By bringing these two worlds together, Data Center Track hopes to start a conversation about the impact of this divide and how to move forward.

Who Will Be There?

Bahgat Sammakia, PhD

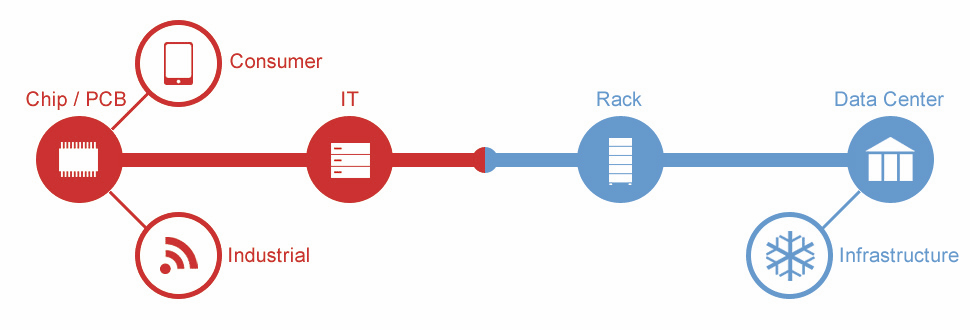

A Holistic View of a Fragmented Data Center Industry

The entire data center industry is now changing thanks to consolidation, cost control, and cloud support. The new model is based on improving efficiency through a holistic design that combines workload allocation, thermal management, reliability, and availability into an expert system that intelligently predicts and manages itself by sensing its surroundings and its effect on the environment. Increased demands due to the rise of exciting applications, the internet of things, and new tech on the horizon will inevitably lead to additional changes, including new thermal management approaches that are increasingly efficient and inexpensive. Until recently, more than half of the energy used in a data center did not go toward any computational activity, but rather was wasted due to inefficient energy usage and poor thermal management. There has also been a gradual shift from an infrastructure, hardware, and software ownership model towards a subscription and capacity-on-demand model. To support application demands, especially through the cloud, today’s data center capabilities must rise to match consumer demand.


Jonathan G. Koomey, PhD

A Decade of Data Center Efficiency: What’s Past is Prologue!

In 2006, EPA’s ENERGY STAR group brought the information technology industry together twice to discuss energy efficiency in data centers. Electricity use of these facilities had doubled in the preceding 5 years, and most folks in the industry knew something had to be done. Since then, server manufacturers have redesigned their devices to reduce power use when idle, highly efficient data center modules have become commonplace, virtualization of workloads is routine, efficiency metrics are in widespread use, cloud computing is a well-known term, and the most sophisticated owner operators use engineering simulation to better manage design and operation of their facilities. The results have been dramatic. Total electricity used by data centers in the US has grown little since 2007, even as delivery of computing services continues to explode. However, most enterprise data centers operate at far lower efficiencies than their cloud computing counterparts, which makes them much more expensive to own and operate. Surprisingly, the solutions are now impeded primarily by management problems, not technology. Senior management routinely fails to understand the tight link between better energy performance and better business performance. Once that changes, the pace of efficiency improvements can proceed even more rapidly. This talk will review these historical developments and describe how industry can do even better in coming years.


What Topics Will Be Covered?


Who Is Sponsoring This Event?
